Be wary of your online "friends": Your anonymous chat buddy might be a sexual predator — or an artificially intelligent chatbot designed to trap pedophiles.

A new chatbot does just that. Its name is Negobot, but some are calling it "virtual Lolita" after the novel by Vladimir Nabokov because it poses as an emotionally vulnerable teenage girl and tries to trick online predators into giving away information that would help the authorities track down pedophiles.

Chatbots like Negobot use artificial-intelligence techniques such as natural language processing and machine learning to react to users' specific comments and remember past conversations.

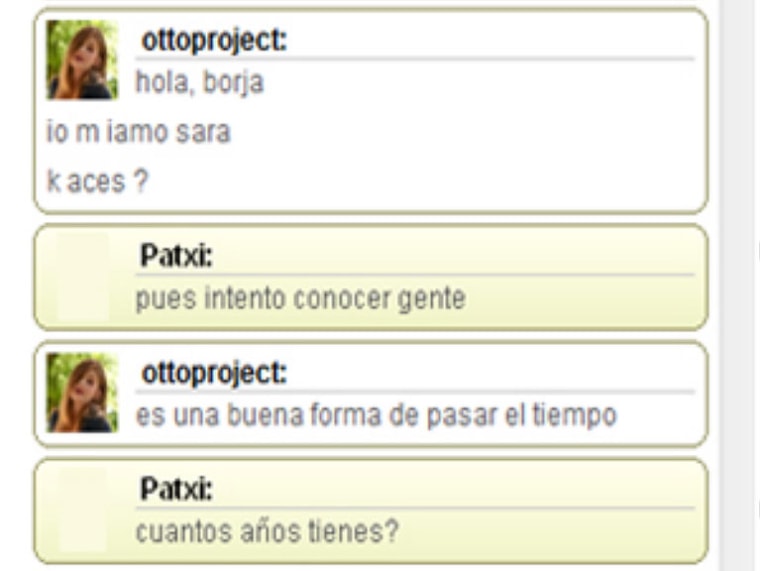

Using these methods, researchers at the University of Deusto in Spain were able to make Negobot sound like a stereotypical teenage girl, complete with slang, misspellings and pop-culture knowledge.

The bot — which can speak multiple languages thanks to translation technology — is also programmed to act in a manner that could be considered vulnerable, trusting and naïve.

But what makes Negobot unique — aside from its crime-fighting mission — is its use of game theory to trap potential pedophiles.

That means, essentially, that Negobot treats conversations as a game, with the objective of gathering as much potential evidence of pedophilic tendencies as possible.

Just like a game player, Negobot must obey certain rules, and has a specific set of "moves" it can make. For example, Negobot will always start a conversation in a "neutral" mode, talking only about general subjects like movies or music.

Participants chatting with Negobot can stay in this state, as long as they don't bring up sexual or otherwise disturbing content.

If they do turn the conversation toward innuendo, Negobot will switch to the "possibly [pedophile]" level, and begin sharing more personal information, like references to a troubled home life and a desire for companionship.

If the sexual content increases enough that Negobot registers the user as "allegedly pedophile," the bot's objectives change again: Now, its main goal is to keep the user in conversation for as long as possible. The bot will give users fake private information and try to lure them into a physical meeting, which, ironically, is probably the alleged pedophile's goal as well.
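For readers who think in code, the three levels described above behave like a small state machine. The sketch below is only an illustration of that idea, not the researchers' implementation: the article does not say how Negobot scores a conversation, so the count of flagged messages, the two thresholds and the names `Level`, `STRATEGY` and `level_for` are all assumptions made up for this example.

```python
from enum import Enum


class Level(Enum):
    # Level names follow the article's wording.
    NEUTRAL = "neutral"
    POSSIBLY = "possibly pedophile"
    ALLEGEDLY = "allegedly pedophile"


# Hypothetical thresholds: the article does not describe how Negobot
# actually decides when to move from one level to the next.
POSSIBLY_THRESHOLD = 1
ALLEGEDLY_THRESHOLD = 3

# What the bot tries to do at each level, paraphrased from the article.
STRATEGY = {
    Level.NEUTRAL: "talk about general subjects like movies or music",
    Level.POSSIBLY: "share more personal information",
    Level.ALLEGEDLY: "keep the user in conversation as long as possible",
}


def level_for(flagged_messages: int) -> Level:
    """Map an assumed count of flagged messages to a conversation level."""
    if flagged_messages >= ALLEGEDLY_THRESHOLD:
        return Level.ALLEGEDLY
    if flagged_messages >= POSSIBLY_THRESHOLD:
        return Level.POSSIBLY
    return Level.NEUTRAL


if __name__ == "__main__":
    for count in (0, 1, 3):
        level = level_for(count)
        print(count, level.value, "->", STRATEGY[level])
```

In this toy version, a chat with no flagged messages stays neutral, a single flagged message moves it to the middle level, and three or more move it to the top level, where the bot's strategy switches to prolonging the conversation.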

Negobot has been field-tested in Google Chat and other online-conversation settings, and online security blog Naked Security said the bot "sounds like a promising start to address the alarming rate of child sexual abuse on the Internet."

However, some believe Negobot crosses the line from bait to hunter. Because Negobot "wins" by collecting as much incriminating information as possible, it tries to discourage its conversation partner from ending the chat.

If users express impatience with Negobot's persistence, the bot will play the victim, and try to guilt users into continuing the conversation because it's lonely and "looking for affection from somebody."

If users ignore Negobot, the program will eventually try to recapture their attention by offering sexual favors.

This could be considered entrapment or even harassment, and any potential criminal information gathered as a result of such "offers" is unlikely to hold up in court, John Carr, a specialist in online child safety, told the BBC.

"Undercover operations are extremely resource-intensive and delicate things to do," Carr said. "It's absolutely vital that you don't cross a line into entrapment, which will foil any potential prosecution."

The researchers, whose paper on Negobot is available online, haven't announced any plans to modify Negobot's aggressive pursuit protocols. However, they do plan to enhance the bot's performance by giving it more features, such as the ability to recognize irony, as well as continuing to monitor linguistic trends on the Internet in order to keep Negobot sounding young, girlish and vulnerable — the perfect digital Lolita.

Email jscharr@technewsdaily.com or follow her @JillScharr. Follow us @TechNewsDaily, on Facebook or on Google+.