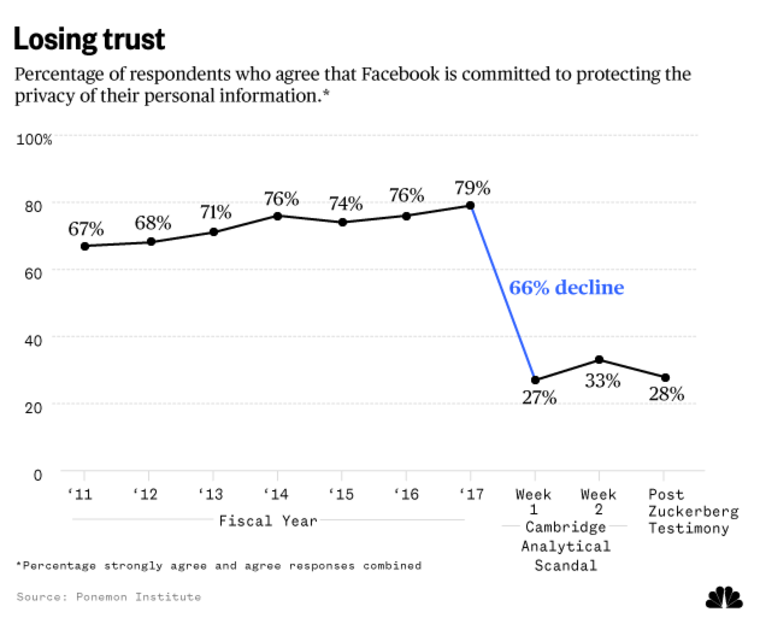

Facebook users' confidence in the company has plunged by 66 percent as a result of revelations that data analysis firm Cambridge Analytica inappropriately acquired data on tens of millions of Facebook users — and CEO Mark Zuckerberg’s public mea culpa during two days of congressional hearings last week did not change that, a new report reveals.

Only 28 percent of the Facebook users surveyed after Zuckerberg’s testimony last week believe the company is committed to privacy, down from a high of 79 percent just last year, according to a survey by the Ponemon Institute, an independent research firm specializing in privacy and data protection.

The institute’s chairman, Larry Ponemon, who has been tracking online privacy for more than 20 years, told NBC News he was “shocked” by the negative repercussions. He expected a decrease in trust, but not a 66 percent drop.

“We found that people care deeply about their privacy and when there is a mega data breach, as in the case of Facebook, people will express their concern. And some people will actually vote with their feet and leave,” Ponemon said.

Ponemon asked about 3,000 Facebook users how they felt about the statement “Facebook is committed to protecting the privacy of my personal information.” In 2011, 67 percent agreed. That grew to 79 percent in 2017.

But just one week after NBC News' U.K. partner ITN Channel 4 News dropped the Cambridge Analytica bombshell, confidence in Facebook fell to 27 percent. It rose slightly, to 33 percent, the following week, then dipped to 28 percent after Zuckerberg’s highly publicized testimony on Capitol Hill.

Ponemon told NBC News he was not surprised that Zuckerberg’s performance before Congress did not move the needle upward.

“I don't care if he was the most eloquent, the smartest privacy guy in the world, there was no positive outcome that could have been achieved,” Ponemon said.

Other key findings

Most people who use social media realize their information is being collected and shared or sold. That’s Facebook’s business model.

“It is all about economics,” wrote one of the Ponemon survey respondents. “Facebook doesn’t see any value in protecting the privacy of its users.”

“It is foolish to believe Facebook or any other [social network] would be committed to protecting my privacy,” another said.

The majority of respondents made it clear that they want Facebook to tell them when something happens to their data. Remember, users only found out about the Cambridge Analytica breach, which took place in 2015, when it was reported by ITN Channel 4 News and written up in The New York Times.

The survey revealed that 67 percent believe Facebook has “an obligation” to protect them if their personal information is lost or stolen and 66 percent believe the company should compensate them if that happens.

Facebook users also expressed the desire to have more control over their data: Sixty-six percent say they have a right not to be tracked by Facebook, up from 55 percent before the breach. Sixty-five percent want the company to disclose how it uses the personal information it collects.

Facebook did not respond to an NBC News request for comment on the Ponemon survey.

In late March, Facebook announced steps to make its privacy policies more transparent. A central hub will make it easier for users to see their privacy settings and to find out what data they’re sharing and which companies are collecting it.

Will upset Facebook users pull the plug?

Nine percent of those surveyed by Ponemon said they had already stopped using Facebook. Another 31 percent said they were likely or very likely to stop using it or to use it less.

Will they actually do that? Probably not, Ponemon said. And other experts agree.

“Just because people say they're concerned about their privacy doesn't necessarily mean it will affect their behavior,” said Robert Blattberg, a professor of marketing at Carnegie Mellon University’s Tepper School of Business. “If you look at these kinds of events, people get all upset about it and then their behavior doesn't change very much.”

It comes down to the benefits of Facebook, which is ingrained in so many people’s lives, and whether users see a viable alternative. Instagram may seem like a better choice, but it’s owned by Facebook.

“At first, I thought about closing my Facebook account, but quickly realized that starting anew with another [social network] would take lots of effort. Also, other company’s privacy practices are likely to be just like Facebook anyway,” wrote one of the survey respondents.

“I am very disappointed in Facebook, but I’m willing to give the company a second chance,” another said.

Even so, a small percentage change in the number of people who use Facebook — a drop of 3 or 4 percent — could “significantly impact their profitability,” Blattberg told NBC News.

Nuala O’Connor, president and CEO of the Center for Democracy & Technology, doesn’t think people should delete their Facebook accounts to send a message to the company.

“This is a major platform that is important to people for connection and community,” O’Connor said. “I think a more reasonable response is to change your privacy settings. I also think the onus is on Facebook to be more transparent.”

Is more government regulation needed?

In his appearances before Congress last week, Zuckerberg said he was open to regulations, telling lawmakers, "My position is not that there should be no regulation. I think the real question, as the internet becomes more important in people's lives, is what is the right regulation, not whether there should be or not."

Blattberg said legislation is “the biggest risk” Facebook faces as a result of the Cambridge Analytica fiasco. If users were required to opt in, that is, to affirmatively give Facebook permission to have their data collected, shared or sold, it could disrupt the company’s business model. The impact would be felt by every website and online service that’s free to use for those willing to give up their privacy.

The Facebook users surveyed by Ponemon clearly see the need for government action. More than half (54 percent) said new rules are needed to protect privacy when accessing the internet.

For years, consumer advocates have called on Congress to pass strong online privacy regulations, but lawmakers have been unwilling to act. And few consumer advocates expect any meaningful legislation to come from a Congress focused on reducing regulations.

O’Connor, on the other hand, believes this may be “the watershed moment” that prods congressional action.

“I think that the time has come for this country to consider baseline, comprehensive data privacy legislation,” she said.

Herb Weisbaum is The ConsumerMan.