Researchers have figured out how to read your mind and tell whether you are feeling sad, angry or disgusted – all by looking at a brain scan.

The experiment, using 10 acting students, showed people have remarkably similar brain activity when experiencing the same emotions. And a computer could predict how someone was feeling just by looking at the scan.

The findings could be used to help treat patients with various mental health conditions, and even provide a hard, medical diagnosis for emotional disorders. They might also open a window into the minds of people with developmental disorders such as autism, the researchers at Carnegie Mellon University in Pittsburgh say.

And one big, immediate application – testing advertisements. “What emotion do you want to evoke with your ad for the latest BMW?” said psychology professor Marcel Just, who helped oversee the study.

"This research introduces a new method with potential to identify emotions without relying on people's ability to self-report," added Karim Kassam, assistant professor of social and decision sciences at CMU, who led the study. "It could be used to assess an individual's emotional response to almost any kind of stimulus, for example, a flag, a brand name or a political candidate."

It’s a small experiment: fMRI is expensive, and studies of this type cannot easily be done with large groups. Other labs will have to replicate the results before they become accepted science, but the study starts to answer questions about how the brain experiences emotion.

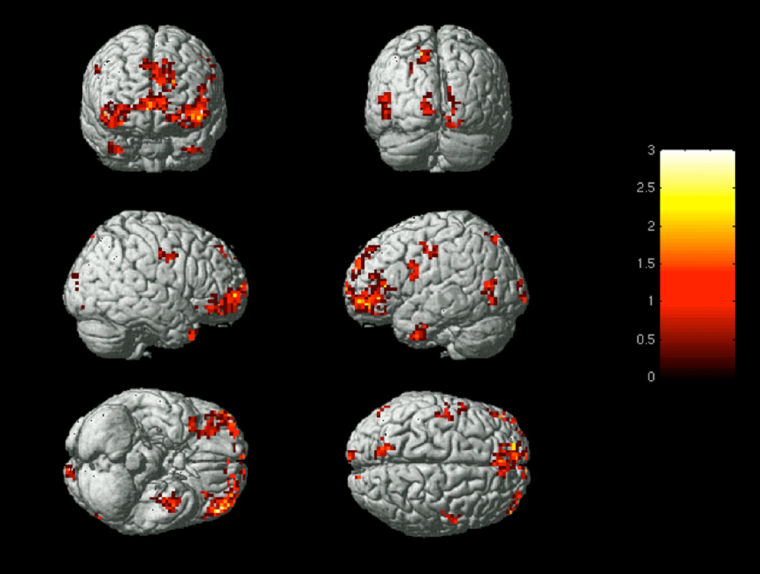

Kassam’s team enlisted acting students at Carnegie Mellon, asking them to induce nine emotional states: anger, disgust, envy, fear, happiness, lust, pride, sadness, and shame. Each was allowed to do this in whatever way he or she wished. Then they were put into a functional magnetic resonance imaging (fMRI) scanner, which took real-time images of their brain activation while they were shown words to bring them into each mood.

Each actor cycled through each mood six times. Then the researchers tested their computer program to see whether it could tell which emotion was being conjured up, based on the brain scans.

“We said, okay, here are five times when this person experienced sadness,” Just told NBC News. “Then we showed it the sixth instance, and asked, which one it was. It could identify the emotion with something like 84 percent accuracy on average.”
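The train-on-five, test-on-the-sixth procedure Just describes is a form of leave-one-out cross-validation. The sketch below illustrates the idea only: the scans are randomly generated stand-ins, the feature count is hypothetical, and the nearest-centroid classifier is an assumption chosen for brevity, since the article does not say which classifier the team used. Its printed accuracy says nothing about the study's 84 percent figure.

```python
import numpy as np

EMOTIONS = ["anger", "disgust", "envy", "fear", "happiness",
            "lust", "pride", "sadness", "shame"]
N_REPS = 6        # each actor cycled through each mood six times
N_FEATURES = 50   # hypothetical number of voxel features per scan


def make_synthetic_scans(rng, noise=1.0):
    """Return made-up scans with shape (emotions, repetitions, features)."""
    prototypes = rng.normal(size=(len(EMOTIONS), 1, N_FEATURES))
    jitter = rng.normal(size=(len(EMOTIONS), N_REPS, N_FEATURES))
    return prototypes + noise * jitter


def leave_one_repetition_out(scans):
    """Train on five repetitions, test on the held-out one, for every fold."""
    n_emotions, n_reps, _ = scans.shape
    correct = 0
    for held_out in range(n_reps):
        train = np.delete(scans, held_out, axis=1)   # the other five repetitions
        centroids = train.mean(axis=1)               # one average pattern per emotion
        test = scans[:, held_out, :]                 # the unseen repetition
        # Distance from every test scan to every emotion centroid.
        dists = np.linalg.norm(test[:, None, :] - centroids[None, :, :], axis=2)
        predicted = dists.argmin(axis=1)
        correct += int((predicted == np.arange(n_emotions)).sum())
    return correct / (n_emotions * n_reps)


rng = np.random.default_rng(0)
accuracy = leave_one_repetition_out(make_synthetic_scans(rng))
print(f"accuracy on synthetic data: {accuracy:.2f}")
```

With nine emotions, guessing at random would be right about 11 percent of the time, which is the baseline any such accuracy figure should be read against.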

And although 10 different people took part in the experiment, the brain activation patterns were similar.

“You can really tell which emotion one of these people is experiencing from their brain activation patterns,” Just says.

“You have no idea how I experience happiness. You imagine it must be similar,” Just adds. “But there has been no way to compare it, until now.”

The research, published in the Public Library of Science journal PLOS ONE, is certain to be controversial. Scans made with fMRI must be interpreted carefully, and many brain experts disagree on how to do it. But scientists can tell many things by looking at the brain’s signals. In 2001, a team at the National Institute of Mental Health found they could tell whether a person was looking at a face, a house, or a chair by using fMRI scans.

An emotion is a lot fuzzier than looking at an object, however.

Just said the team tested their findings in several different ways. When they used one volunteer’s set of scans to “set” the computer, and then evoked an emotion in a different volunteer, the computer guessed that volunteer’s emotion correctly only 70 percent of the time.
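The cross-person test can be sketched the same way: fit the classifier on one volunteer and score it on another. Everything here is illustrative. The scans are simulated as a shared emotion pattern plus made-up individual variation, the feature count and noise levels are hypothetical, and the nearest-centroid classifier is an assumption rather than the team's stated method.

```python
import numpy as np

N_EMOTIONS, N_REPS, N_FEATURES = 9, 6, 50   # the feature count is hypothetical


def simulate_subject(shared, rng, individual=0.5, noise=1.0):
    """Made-up scans: a shared emotion pattern plus per-person variation."""
    personal = shared + individual * rng.normal(size=shared.shape)
    jitter = rng.normal(size=(N_EMOTIONS, N_REPS, N_FEATURES))
    return personal[:, None, :] + noise * jitter


def cross_subject_accuracy(train_scans, test_scans):
    """Fit emotion centroids on one person, classify another person's scans."""
    centroids = train_scans.mean(axis=1)                 # (emotions, features)
    flat = test_scans.reshape(-1, test_scans.shape[-1])  # (emotions * reps, features)
    labels = np.repeat(np.arange(test_scans.shape[0]), test_scans.shape[1])
    dists = np.linalg.norm(flat[:, None, :] - centroids[None, :, :], axis=2)
    return float((dists.argmin(axis=1) == labels).mean())


rng = np.random.default_rng(1)
shared = rng.normal(size=(N_EMOTIONS, N_FEATURES))
volunteer_a = simulate_subject(shared, rng)
volunteer_b = simulate_subject(shared, rng)
score = cross_subject_accuracy(volunteer_a, volunteer_b)
print(f"cross-subject accuracy on synthetic data: {score:.2f}")
```

In this toy setup, raising the hypothetical `individual` parameter makes the two simulated people less alike and pushes the score down, which mirrors why a drop from the within-person figure is expected when the computer is trained on someone else.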

“But still I think it is amazing that there is this universal human way of representing emotions in the brain,” Just says.

There is also the question of whether the fMRI and computer were seeing true emotion, or just capturing the idea of anger, sadness and so on. So the team checked this with a series of tests that the actors weren’t warned about. “We showed our subjects these pictures that evoke disgust universally,” Just said. “They were really revolting things having to do with insects and toilets and wounds.”

The computer was able to discern the true disgust that the unsuspecting acting students felt.

The experiment also pointed to four ways of classifying emotions: valence, which is whether an emotion is positive or negative; arousal, which is whether it is intense or mild; whether there is a social element, in which another person causes the emotion; and lust, or sexual attraction.

“It tells you about the building blocks of thought,” Just says.

“You can imagine applying this technique to tell whether someone is autistic by the way their brain represents social interactions,” he added. The team is also trying to see whether the method can detect when someone is seriously contemplating suicide.

Researchers have long known that facial expressions associated with many emotions are almost universal – anger, disgust, fear, happiness all look the same on most people’s faces, and people from one culture can tell what emotion another’s face is displaying. But this is a way to get into the brain itself, says Just.

“I think this provides a possible entirely new way to study psychiatric alterations of thought,” Just says.