As robots and other intelligent machines grow smarter and more capable, some experts worry about the day when their intelligence surpasses ours. But even without such “superintelligence,” robots are capable of pushing our emotional buttons — as a provocative new study makes abundantly clear.

A team of researchers at the University of Duisburg-Essen in Germany found that people who were asked to turn off a humanoid robot hesitated to do so if the bot pleaded to stay on. The participants’ reluctance demonstrates a telling quirk in our relationship with technology, the researchers said: People are prone to treat robots more like people than machines.

“We are preprogrammed to react socially,” said study co-author Nicole Krämer, a professor of social psychology at the university. “We have not yet learned to distinguish between human social cues and artificial entities who present social cues.”

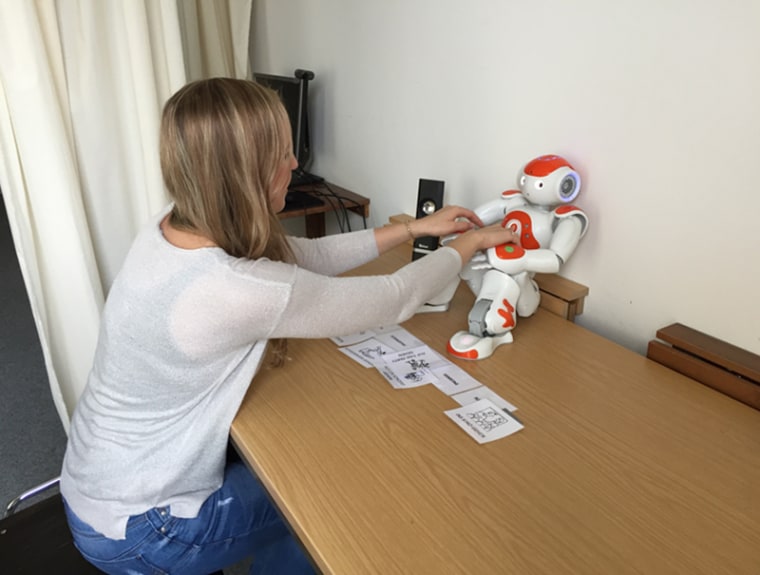

For the study, published July 31 in the journal PLOS ONE, Krämer and her collaborators asked 89 people to interact with Nao, a cute, commercially available robot, and then turn it off. In some instances, the robot was personable, discussing pizza and its birthday. In others, it acted like an emotionless, talking appliance.

In both cases, the participants hesitated when the robot said: “No! Please do not switch me off!” — and some refused to turn Nao off altogether. Asked why they were reluctant to turn the robot off, one participant said, “Because Nao said he does not want to be switched off.” Another said, “I felt sorry for him.”

Those who worked with the more humanlike Nao found the decision particularly stressful. Surprisingly, however, people who interacted with Nao in its machine-like persona took longer to hit the switch — apparently, the researchers said, because the sudden show of simulated emotion was so jarring.

Robots capable of playing on our emotions are the future of artificial intelligence, according to Fritz Breithaupt, a humanities scholar and cognitive scientist at Indiana University in Bloomington. “These emotionally manipulative robots will soon read our emotions better than humans will,” he told NBC News MACH in an email. “This will allow them to exploit us. People will need to learn that robots are not neutral.”

Brian Scassellati, a professor of computer science, cognitive science and mechanical engineering at Yale, said socially adroit robots — like those being developed as companions to elderly people and tutors for children — would be able to influence our perceptions and behavior.

But he said he wasn’t concerned that such robots might manipulate us inappropriately. Advertising, he said, has long manipulated us, and we have learned to adapt to it.

“Advertising is designed to get my attention to make me think certain positive things about a particular product,” he said. “The idea that robots are doing this as well — that fact on its own isn’t something that should particularly worry us.”

Colin Allen, a professor of history and philosophy of science at the University of Pittsburgh, said in an email that he agrees with Scassellati’s comparison to advertising. But he urged caution nonetheless: “We should be concerned about how we can trust (and monitor) the corporations or other entities that might be tempted to exploit people’s tendencies, and at the same time how we can help to educate people.”

Scassellati does worry about the pace at which new robotic technology is becoming available. “We are getting new systems that are out there without the time for careful study,” he said. Especially with regard to robots intended for children, he said, “this might have a longer-term effect that is not obvious from watching just one instance.”
