Everyone wants to take a better selfie, and this computer program, after sifting through 2 million of them, can tell you how. Andrej Karpathy, a Ph.D. student at Stanford, set out to investigate whether there are any patterns in what makes a selfie good, or at least popular.

To do so, he used a powerful "convolutional network," a system that quickly analyzes an image in dozens of ways and looks for patterns that help it sort that image into one of several categories it's been trained to recognize. It actually works a bit like the brain's visual system, though it's not nearly as good.
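
For the curious, here is a rough idea of what one of those "ways" of analyzing an image looks like. This is only a toy sketch in Python, not Karpathy's code: the function name `convolve2d`, the tiny 4x4 image and the hand-picked filter are all made up for illustration, and a real network learns many such filters from data instead of having them written by hand.

```python
def convolve2d(image, kernel):
    """Slide `kernel` over `image` (no padding) and return the filter responses.

    This is the sliding-window product that convolutional networks call
    "convolution" (strictly speaking, a cross-correlation).
    """
    kh, kw = len(kernel), len(kernel[0])
    out_h = len(image) - kh + 1
    out_w = len(image[0]) - kw + 1
    return [
        [
            sum(
                image[r + i][c + j] * kernel[i][j]
                for i in range(kh)
                for j in range(kw)
            )
            for c in range(out_w)
        ]
        for r in range(out_h)
    ]


# Toy 4x4 grayscale "image": dark on the left, bright on the right.
image = [
    [0, 0, 9, 9],
    [0, 0, 9, 9],
    [0, 0, 9, 9],
    [0, 0, 9, 9],
]

# Hypothetical hand-picked filter that responds to a dark-to-bright edge.
kernel = [
    [-1, 1],
    [-1, 1],
]

response = convolve2d(image, kernel)
for row in response:
    print(row)
```

The filter responds strongly only where dark pixels meet bright ones and stays at zero elsewhere. A convolutional network stacks many layers of such filters, so later layers can pick up on larger patterns built from these simple ones.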

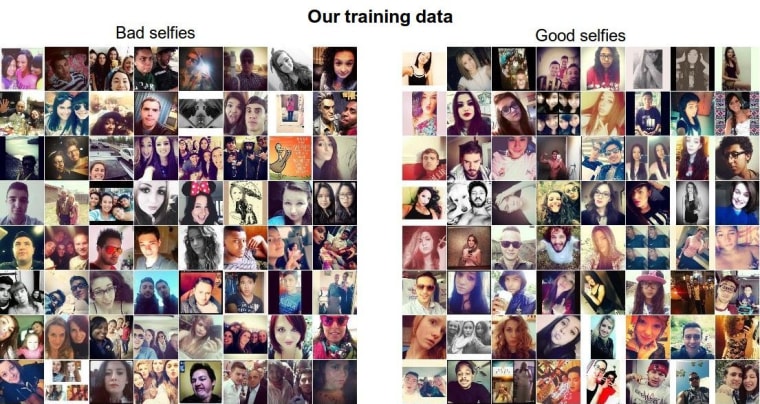

Karpathy harvested 5 million photos from the Web tagged #selfie, then winnowed that number down to 2 million that were suitable for rating. He then sorted the images roughly by popularity, and fed them into the computer, with the lower-rated half being considered "bad" and the upper-rated half "good." The computer examined every photo and came up with a set of computational rules that allowed it to sort photos into good and bad piles — or at least "more likely to be popular" and "less likely" piles.
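
The "good" and "bad" labeling step can be sketched in a few lines of Python. This is a simplified illustration, not Karpathy's actual pipeline: the article does not say exactly how popularity was measured, so the like counts and the function name `label_by_popularity` below are assumptions.

```python
from statistics import median


def label_by_popularity(like_counts):
    """Label each photo 1 ("good") if its count is above the median, else 0 ("bad")."""
    cutoff = median(like_counts)
    return [1 if count > cutoff else 0 for count in like_counts]


# Hypothetical like counts for six selfies.
likes = [3, 120, 45, 8, 300, 17]
labels = label_by_popularity(likes)
print(labels)
```

Splitting at the median puts roughly half the photos in each pile, so the program sees about as many "good" examples as "bad" ones during training.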

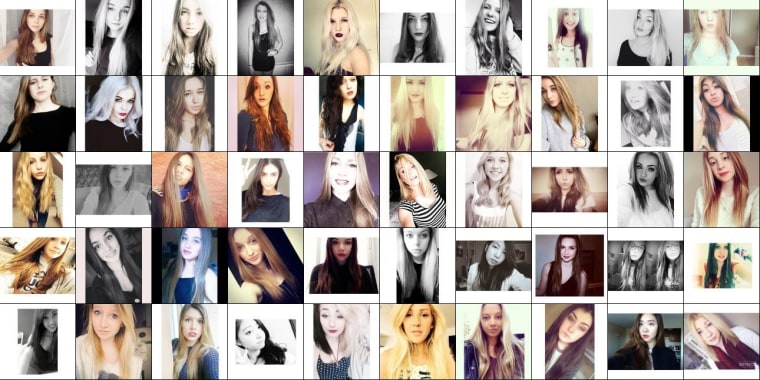

The computer's rules are based on pure data and image analysis — just patterns it noticed in the pixels that let it associate that particular image with either "good" or "bad." But the results when it was set loose on a new set of 50,000 selfies are surprisingly consistent. Here are the top 50, according to its ratings:

Notice a pattern? The program picked almost exclusively young white women, often with their heads tilted, photographed from a certain distance, and often using a black-and-white or desaturated filter. Men, who appear much further down the "best" list, apparently need to back off from the camera a little and have a trendy haircut if they want to attract views. Diversity is not valued highly, it seems, and in an email to NBC News Karpathy suggested this may be a result of the demographics of Instagram.

"If the majority of selfies were taken by white women they might end up slightly more favored in the results, since the model will spend extra effort on finding good features for those cases," he wrote.

Again, this says more about the relative popularity of certain types and compositions of photos online than about the actual quality of the photo, but it's an interesting bit of research that shows the versatility of neural networks and computer vision systems. You can read more about the process (and see way more selfies) at Karpathy's blog.

And if you're curious how your selfies stack up, mention Karpathy's @deepselfie Twitter bot in a tweet with an image, and it'll tell you which pile it thinks your picture would end up in. Don't take it personally, though. After all, it's just a computer, right?