Research at MIT has produced a system that can intelligently pick human actions and movements out of videos. What makes it possible isn't some high-resolution sensor, but a clever way of applying language rules to images.

Let's say a computer's task is to identify, in a few minutes of video, a person making a sandwich. Of course the person must take out the bread, spread the mayo and add the ingredients, but perhaps not in that order. Do they slice the tomato first? Do they put the bread away in the middle? That kind of variation in human activity makes even simple actions difficult to identify.

Hamed Pirsiavash, of MIT's Computer Science and Artificial Intelligence Laboratory, took a unique approach. By using algorithms normally applied to understanding human language, he was able to improve the quality of a system for understanding human actions.

You might say "he walked to the store" or "he went to the shop," but generally you can't avoid saying "he" or "to" when talking about a man going somewhere. Pirsiavash applied this logic to computer vision: Now the computer knows that whether a person adds the tomato or the lettuce first, they always take out the bread before spreading the mayo, and the second slice of bread always goes on last.
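To make the grammar idea concrete, here is a toy sketch in Python. It is not Pirsiavash's algorithm. The action labels and the hand-written rules are assumptions made up for illustration, and a real system would work from video, not from a tidy list of labels. The sketch only shows the principle: a few steps must appear in a fixed order, while everything else is free to move around.

```python
# Toy "grammar" for sandwich-making. All labels here are hypothetical;
# a real system would have to infer actions from video frames.
REQUIRED_ORDER = ["take_out_bread", "spread_mayo", "add_top_slice"]


def is_sandwich(actions):
    """Return True if a sequence of action labels fits the toy grammar."""
    # The fixed steps must each appear exactly once, in this relative order.
    fixed = [a for a in actions if a in REQUIRED_ORDER]
    if fixed != REQUIRED_ORDER:
        return False
    # The second slice of bread always goes on last.
    # Every other action (tomato, lettuce, putting the bread away)
    # may happen at any earlier point.
    return actions[-1] == "add_top_slice"


tomato_first = ["slice_tomato", "take_out_bread", "put_bread_away",
                "spread_mayo", "add_lettuce", "add_tomato", "add_top_slice"]
mayo_before_bread = ["spread_mayo", "take_out_bread",
                     "add_tomato", "add_top_slice"]

print(is_sandwich(tomato_first))       # True
print(is_sandwich(mayo_before_bread))  # False
```

The first sequence passes even though the tomato is sliced before the bread comes out, because only the three fixed steps are constrained.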

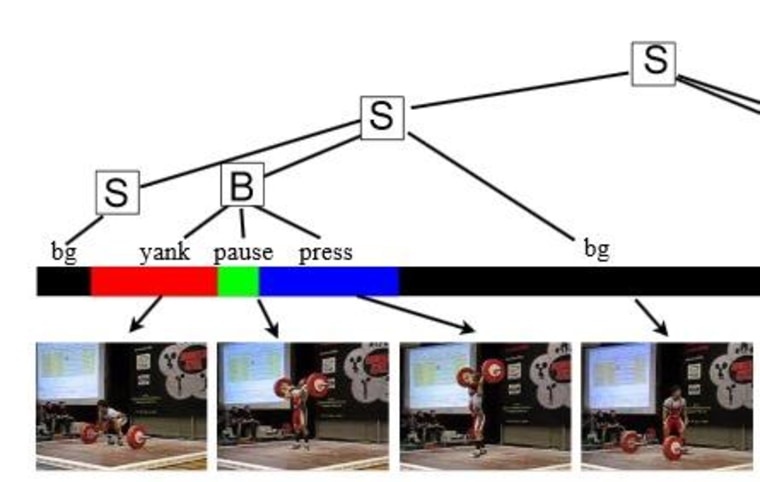

Using this technique, his system (after being "trained" on more structured video) was able to watch video of Olympic contestants and identify what event was shown, based on its individual portions: the run, release and throw of the javelin, for instance — even if certain portions are obscured or not pictured.

Such a system could be used to watch for actions on video feeds, from alerting medics if someone collapses to monitoring athletic training or physical rehabilitation.

Pirsiavash told NBC News in an email that the field of computer vision is blowing up as processors speed up and more data is made available, but if his research is any indication, it takes more than raw computing power to make sense of that data.