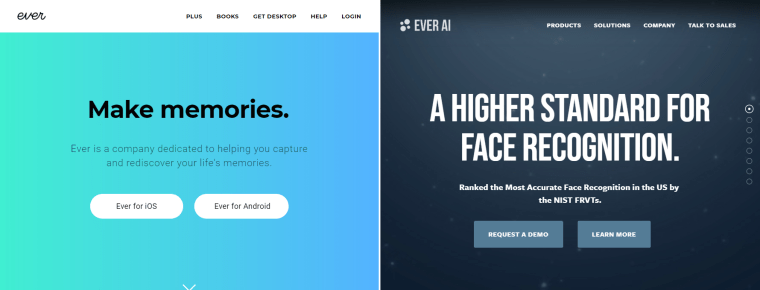

SAN FRANCISCO — “Make memories”: That’s the slogan on the website for the photo storage app Ever, accompanied by a cursive logo and an example album titled “Weekend with Grandpa.”

Everything about Ever’s branding is warm and fuzzy, about sharing your “best moments” while freeing up space on your phone.

What isn’t obvious on Ever’s website or app — except for a brief reference that was added to the privacy policy after NBC News reached out to the company in April — is that the photos people share are used to train the company’s facial recognition system, and that Ever then offers to sell that technology to private companies, law enforcement and the military.

In other words, what began in 2013 as another cloud storage app has pivoted toward a far more lucrative business known as Ever AI — without telling the app’s millions of users.

“This looks like an egregious violation of people’s privacy,” said Jacob Snow, a technology and civil liberties attorney at the American Civil Liberties Union of Northern California. “They are taking images of people’s families, photos from a private photo app, and using it to build surveillance technology. That’s hugely concerning.”

Doug Aley, Ever’s CEO, told NBC News that Ever AI does not share the photos or any identifying information about users with its facial recognition customers.

Rather, the billions of images are used to instruct an algorithm how to identify faces. Every time Ever users enable facial recognition on their photos to group together images of the same people, Ever’s facial recognition technology learns from the matches and trains itself. That knowledge, in turn, powers the company’s commercial facial recognition products.

Aley also said Ever is clear with users that facial recognition is part of the company’s mission, and noted that it is mentioned in the app’s privacy policy. (That policy was updated on April 15 with more disclosure of how the company uses its customers’ photos.)

There are many companies that offer facial recognition products and services, including Amazon, Microsoft and FaceFirst. Those companies all need access to enormous databases of photos to improve the accuracy of their matching technology. But while most facial recognition algorithms are trained on well-established, publicly circulating datasets — some of which have also faced criticism for taking people’s photos without their explicit consent — Ever is different in using its own customers’ photos to improve its commercial technology.

Facial recognition companies’ use of photos of unsuspecting people has raised growing concerns from privacy experts and civil rights advocates. They noted in interviews that millions of people are uploading and sharing photos and personal information online without realizing how the images could be used to develop surveillance products they may not support.

On Ever AI’s website, the company encourages public agencies to use Ever’s “technology to provide your citizens and law enforcement personnel with the highest degree of protection from crime, violence and injustice.”

The Ever AI website makes no mention of “best moments” snapshots. Instead, in news releases, it describes how the company possesses an “ever-expanding private global dataset of 13 billion photos and videos” from what the company said are tens of millions of users in 95 countries. Ever AI uses the photos to offer “best-in-class face recognition technology,” the company says, which can estimate emotion, ethnicity, gender and age. Aley confirmed in an interview that those photos come from the Ever app’s users.

Ever AI promises prospective military clients that it can “enhance surveillance capabilities” and “identify and act on threats.” It offers law enforcement the ability to identify faces in body-cam recordings or live video feeds.

So far, Ever AI has secured contracts only with private companies, including a deal announced last year with SoftBank Robotics, maker of “Pepper,” a customer service robot designed to be used in hospitality and retail settings. Ever AI has not signed up any law enforcement, military or national security agencies.

NBC News spoke to seven Ever users, and most said they were unaware their photos were being used to develop face-recognition technology.

Sarah Puchinsky-Roxey, 22, from Lemoore, California, used an expletive when told by phone of the company’s facial recognition business. “I was not aware of any facial recognition in the Ever app,” Roxey, a photographer, later emailed, noting that she had used the app for several years. “Which is kind of creepy since I have pictures of both my children on there as well as friends that have never consented to this type of thing.”

She said that she found the company’s practices to be “invasive” and has now deleted the app.

A NEW BUSINESS MODEL

Aley, who joined Ever in 2016, said in a phone interview that the company decided to explore facial recognition about two-and-a-half years ago when he and other company leaders realized that a free photo app with some small paid premium features “wasn’t going to be a venture-scale business.”

Aley said that having such a large “corpus” of over 13 billion images was incredibly valuable in developing a facial recognition system.

“If you are able to feed a system many millions of faces, that system is going to end up being better and more accurate on the other side of that,” he said.

An industry benchmarking test found last year that Ever AI’s facial recognition technology is 99.85 percent accurate at face matching.

When asked if the company could do a better job of explaining to Ever users that the app’s technology powers Ever AI, Aley said no.

“I think our privacy policy and terms of service are very clear and well articulated,” he added. “They don’t use any legalese.”

After NBC News asked the company in April if users had consented to their photos being used to train facial recognition software that could be sold to the police and the military, the company posted an updated privacy policy on the app’s website.

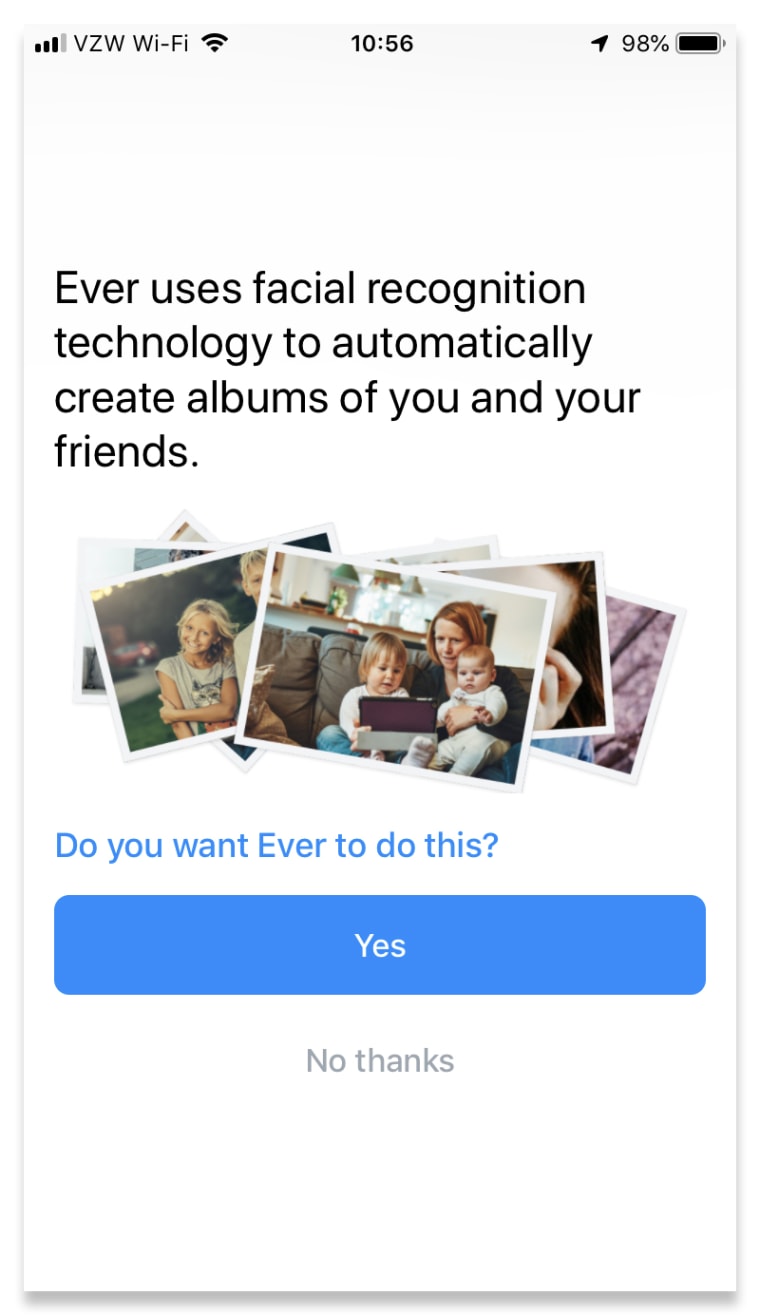

Previously, the privacy policy explained that facial recognition technology was used to help “organize your files and enable you to share them with the right people.” The app has an opt-in face-tagging feature, much like Facebook’s, that allows users to search for specific friends or family members who use the app.

In the previous privacy policy, the only indication that the photos would be used for another purpose was a single line: “Your files may be used to help improve and train our products and these technologies.”

On April 15, one week after NBC News first contacted Ever, the company added a sentence to explain what it meant by “our products.”

“Some of these technologies may be used in our separate products and services for enterprise customers, including our enterprise face recognition offerings, but your files and personal information will not be,” the policy now states.

In an email, Aley explained why the change was made.

“While our old policy we feel covered us and our consumers well, several recent stories (this is not a new story), and not NBC's contact, caused us to think further clarification would be helpful,” he wrote. “We will continue to make appropriate changes as this arena evolves and as we receive feedback, just as we have always done.”

Ever AI has recently been mentioned in Fortune and Inc.

Jason Schultz, a law professor at New York University, said Ever AI should do more to inform the Ever app’s users about how their photos are being used. Burying such language in a 2,500-word privacy policy that most users do not read is insufficient, he said.

“They are commercially exploiting the likeness of people in the photos to train a product that is sold to the military and law enforcement,” he said. “The idea that users have given real consent of any kind is laughable.”

‘A HUGE INVASION OF PRIVACY’

Mariah Hall, 19, a Millsaps College sophomore in Jackson, Mississippi, has been using Ever for five years to store her photos and free up space on her phone.

When she learned from NBC News that her photos were being used to train facial recognition technology, she was shocked.

“The app developers were not clear about their intentions nor their use of my photos. It’s saddening because I believe it’s a huge invasion of privacy,” she wrote in an email.

“If a company uses their consumers’ information to partner with anyone — the police, the FBI — it should be one of the first things that is told to consumers before they download the app.”

Evie Mae, 18, from the United Kingdom, agreed. She said via Twitter direct message that the idea of her face being used to develop a commercial facial recognition product made her “uncomfortable” and that she would “definitely be more careful” about where she uploads her photos in the future.

When NBC News told Aley that some of Ever’s customers did not understand that their photos were being used to develop facial recognition technology that eventually could wind up in the government’s hands, he said he had never heard any complaints.

“We’re always open to feedback and if anybody does have a problem with it they can deal with it by one of two things: They can not be an Ever Album user anymore and they can also say that they want to be an Ever Album user but they would not like to have their photos used to train models. Those options are available to consumers today and always have been.”

After further correspondence with NBC News, Aley wrote on April 30 that the company had added a new pop-up feature to the app that gives users an easy way of opting out of having their images used in the app’s facial recognition tool. The pop-up does not mention that the facial recognition technology is being used beyond the app and marketed to private companies and law enforcement.

“That in-product feature has previously been available to users in certain geographic regions,” Aley wrote, “and we have now made it available to all Ever users globally, whether it is legally required or not.”

CORRECTION (May 10, 2019, 5:26 p.m. ET): An earlier version of this article misstated the timing of a $16 million investment in Ever. That investment was made in 2016, before Ever’s shift to a facial recognition business, not in 2017. A reference to the investment suggesting that the shift to facial recognition benefited the company financially has been removed from the article.