Facebook announced new policies on Thursday to reduce the visibility of vaccine misinformation on its platform, including rejecting ads that contain it and excluding from search results the groups and pages that spread “vaccine hoaxes.”

The announcement follows weeks of criticism from public health advocates and lawmakers who have called for action to curtail inaccurate information about vaccines, which has contributed to a resurgence of childhood diseases that had effectively been eradicated.

“We are fully committed to the safety of our community and will continue to expand on this work,” wrote Monika Bickert, Facebook’s vice president of global policy management, in a blog post announcing the change.

Facebook said it will lower the ranking of groups and pages that spread vaccine misinformation in News Feed and in search, and will exclude them from recommendations and search predictions. The policy will also extend to Instagram’s Explore and hashtag pages.

Members of the anti-vaccination community are united by the unscientific theory that vaccines are toxic and cause myriad illnesses, including autism, and by the mistaken belief that a conspiracy led by the government and the pharmaceutical industry is keeping the truth about vaccines from the public.

The movement has built an audience of hundreds of thousands on Facebook in a variety of groups and pages over the last decade, in part due to an internal search engine that included anti-vaccination content high in its list of results and a recommendation engine that often served up groups and pages based on conspiratorial and medically inaccurate information.

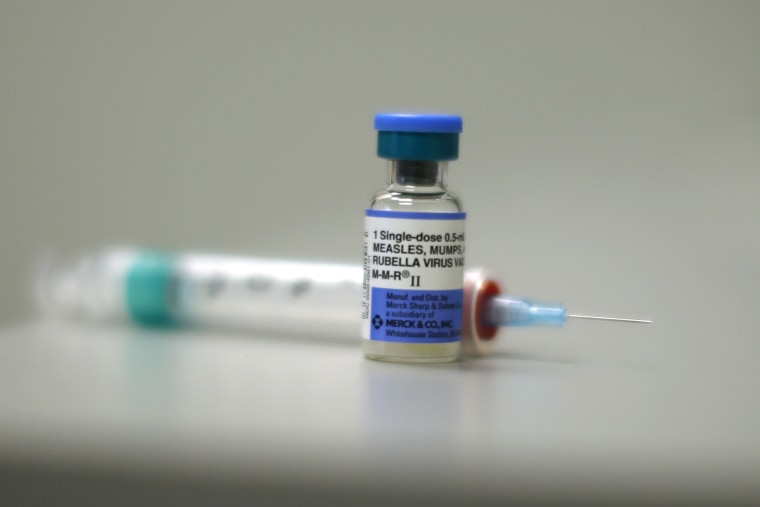

A large study released this week added to the scientific consensus that the measles vaccine does not trigger autism or increase the risk of developing it.

Facebook said it would rely on the World Health Organization and the U.S. Centers for Disease Control and Prevention to judge what constitutes “vaccine hoaxes.”

Ads containing vaccine misinformation will now be rejected, and advertisers will no longer be able to target people interested in “vaccine controversies,” the post said. Advertisers who continue to violate the policy could have their ad accounts disabled.

Facebook also said it would take steps to provide users with authoritative information on vaccines. Vaccine advocates have said their messages were being drowned out by the algorithm’s promotion of inaccurate information.

Much of the pressure on platforms from Capitol Hill came from Rep. Adam Schiff, D-Calif., who wrote letters to Facebook, Google and Amazon expressing concern about the public health danger posed by anti-vaccine content on their platforms.

“I’m pleased that all three companies are taking this issue seriously and acknowledged their responsibility to provide quality health information to their users,” Schiff said in a statement following Facebook’s post. “The crucial test will be whether the steps outlined by Google and Facebook do in fact reduce the spread of anti-vaccine content on their platforms, thereby making it less likely to reach users who are simply seeking quality, fact-based health information for their children and families.”

Facebook’s actions add to a series of moves by other tech companies to address vaccine misinformation on their platforms. Pinterest has been blocking all vaccine-related search results since December, and YouTube removed ads from videos containing anti-vaccination content last month. Facebook’s announcement, which had been in the works since last month, comes two days after an Ohio teenager who got vaccinated against his family’s wishes testified before Congress that his mother had gotten most of her misinformed ideas about vaccines from social media sites like Facebook.

The spread of such misinformation has coincided with a growing number of parents delaying or outright refusing vaccines, which has led to an uptick in vaccine-preventable diseases. A measles outbreak now under way in the Pacific Northwest has infected 71 people, most of them unvaccinated children.