Reddit made headlines this week for banning 2,000 subreddits under new rules that prohibit certain violent and hateful content. While the vast majority of the banned subreddits were small or inactive, the list included r/The_Donald, a pro-Trump community; r/gendercritical, a subreddit where transphobic commentary has thrived; and r/ChapoTrapHouse, the subreddit associated with the left-wing podcast.

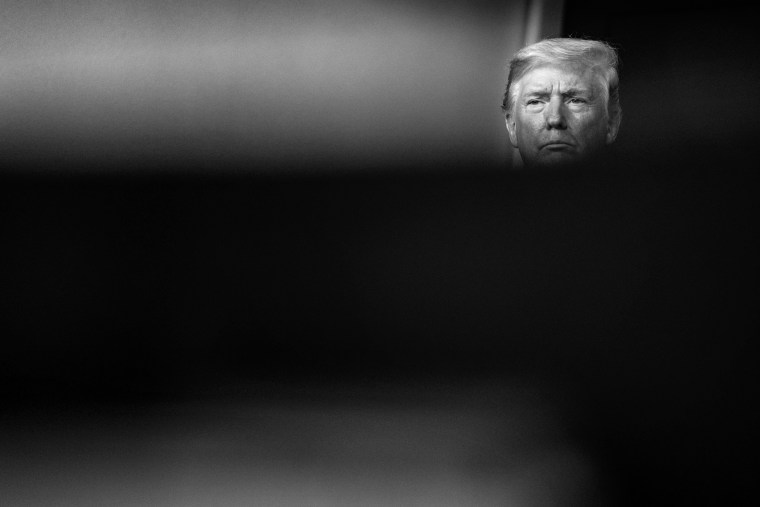

The ban comes amidst a wave of similar actions across Silicon Valley platforms. Within the last week, YouTube, Facebook and Twitter have all taken action against right-wing figures for violating hate speech policies, while popular streaming platform Twitch temporarily banned President Donald Trump himself.

All of these actions represent a sea change in how platforms apply their policies. But what, exactly, prompted the decisions? After all, people have been complaining about this kind of speech for years.

When it comes to Facebook, the answer is obvious: An advertiser boycott caused the company a $7 billion loss, demonstrating that money talks. But Reddit’s decision to ban major subreddits was not a mere shift in policy application, but a change in policy altogether. For many years, the company took a hands-off approach to content moderation, leaving decisions up to individual, volunteer subreddit moderators. Then, last year, Reddit “quarantined” r/The_Donald, making it difficult for new users of the platform to find or participate in the forum.

Unlike Twitter and Facebook and many other large platforms, Reddit doesn’t employ an army of commercial content moderators whose job it is to enforce company policy by deleting posts that violate the rules. Instead, individuals who volunteer to moderate subreddits — which often come with their own, more specific or restrictive policies — would presumably have to enforce Reddit’s policies.

A lung cancer subreddit, for example, might choose to forbid posts about alternative treatments, or a community of birdwatchers might simply ban commentary about politics. Members of a subreddit are expected to read the rules, typically posted at the top of the page, upon joining, so those who violate them are generally aware of the consequences they’ll face.

And content moderation is hard. In nearly a decade of studying it, I’ve seen countless mistakes, some of which had a serious impact on the users involved. Mark Zuckerberg once wrote that moderators at Facebook “make the wrong call in more than one out of every 10 cases” (bold is mine). Reddit’s CEO wrote recently that “a community-led approach is the only way to scale moderation online.”

I used to be wholeheartedly in favor of unmoderated platforms, but I also never imagined that the president of my country would use a corporate platform to spread untruths and hatred toward vulnerable groups. That platforms have the ability to set their own rules under the law now feels exigent.

Still, just as we are rightly wary of allowing the state to censor, we should also be wary of placing our trust in a platform to get moderation right. And to be clear, “wrong” can mean one of several things: Just as companies are criticized for not taking down enough, they must also face scrutiny for their mistakes. YouTube recently took down several white supremacists, but it also regularly removes evidence of war crimes in Syria. Facebook removed accounts associated with the violent “boogaloo” movement — but it also removed a number of prominent Tunisian activists last month.

Dr. Joan Donovan, research director at Harvard’s Shorenstein Center, studies the way platforms are used for manipulation and extremism. “We can’t just think of Reddit as a place for speech,” she says. “We must understand its full capacity for sharing ideas, connecting groups, and coordinating action. Some communities on Reddit form a hive, where groups cook up hoaxes and swarm their targets. That kind of coordinated brigading isn’t about free speech, it’s about power. And like all things about power, we need rules of engagement or else folks with bad intentions take over.”

Incidentally, Reddit’s decision came down at the same time that Parler, a new platform billed by conservatives as a “free speech app,” is making headlines for banning users. As it turns out, Parler has relatively restrictive community standards, which prohibit a number of things that are perfectly legal in the U.S. (contradicting the company’s own Terms of Service, which state that the company “endeavors to allow all free speech that is lawful”).

Both Parler’s standards and Reddit’s new policies represent particular windows of acceptable speech: On the right, pornography is verboten; on the left, hate is unacceptable.

It’s important to note that last year, Reddit came out ahead of other platforms in a ranking of content moderation practices put together by the Electronic Frontier Foundation (where I work), making it the only major platform to fully implement the Santa Clara Principles, a baseline set of standards on transparency and accountability.

That’s why I’m not too worried about Reddit’s new rules, as long as the company can manage to stay true to its community roots, even as it grows beyond its old values.