When all the humans went home for the day, a personal-assistant robot under development in a university lab recently built digital images of a pineapple and a bag of bagels that were inadvertently left on a table – and figured out how it could lift them.

The researchers didn't even know the objects were in the room.

Instead of being frightened by their robot's independent streak, the researchers point to the feat as a highlight in their quest to build machines that can fetch items and microwave meals for people who have limited mobility or are, ahem, too busy with other chores.

Manually loading digital images of every relevant object into a personal-assistant robot, as is currently done, takes too much time, explained Siddhartha Srinivasa, who directs the Personal Robotics Lab at Carnegie Mellon University.

Instead, he and colleagues want their robot to learn to recognize objects all by itself.

"Humans do it naturally: We look at a scene and can immediately understand it, identifying objects and things in it," Srinivasa explained in an email to NBC News.

"A robot with this ability will be able to interact semantically with the world. It will then also be able to interact better with us because it is able to have a common semantic model of the world with us."

The goal, in a sense, is to teach robots to behave like human babies. For example, to learn about rubber ducks, babies don't just look at them and go "Aha, that's a duck." Rather, they pick up the duck, squeeze it, bang it against the tub, and put it in their mouths – in the process, building a mental image of a duck.

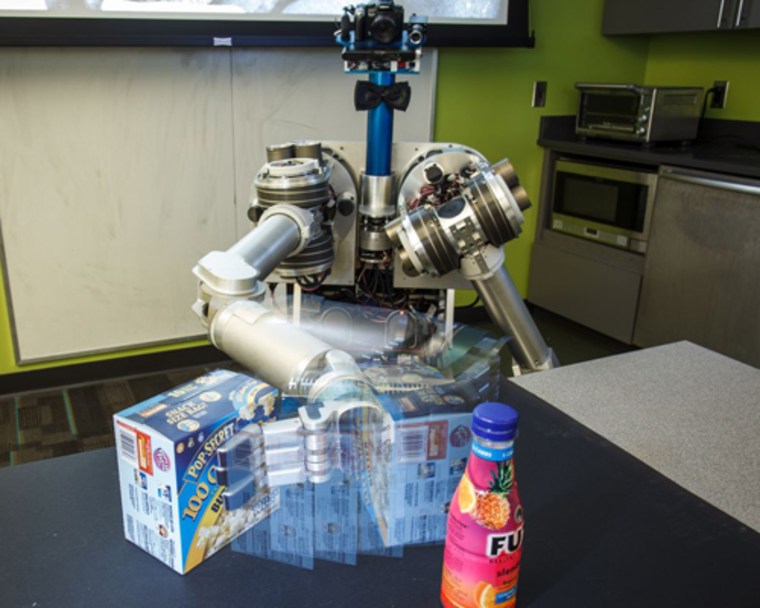

The robot, a two-armed machine called HERB (the Home Exploring Robot Butler), uses color video, a Kinect depth camera and non-visual information to build digital images of objects, which, for its purposes, are defined as anything it can lift.

The depth camera is particularly useful, as it provides three-dimensional shape data. Other information HERB collects includes the object's location: on the floor, on a table, or in a cupboard. It can also determine whether the object moves, and whether it is in a particular place at a particular time, such as mail in a mail slot.

The video below illustrates how the process, called Lifelong Robotic Object Discovery (LROD), works.

At first, everything in the video lights up as a potential object, but as HERB uses the information it gathers – domain knowledge – to discriminate what is and isn't an object, the objects themselves become clearer, allowing the robot to build digital models of them.
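To make the idea of domain knowledge concrete, here is a toy sketch of how simple cues like those HERB collects (size, where a segment sits, whether it moves) could prune candidate segments. It is not HERB's code and does not reproduce the LROD algorithm, which this article does not detail; the `Candidate` fields, the 0.5-meter size limit and the ranking rule are all hypothetical assumptions chosen for illustration.

```python
from dataclasses import dataclass


# Hypothetical per-segment cues; field names and thresholds are
# illustrative assumptions, not values from HERB or LROD.
@dataclass
class Candidate:
    name: str
    max_extent_m: float          # largest dimension from depth data, in meters
    on_support_surface: bool     # resting on a floor, table, or shelf
    moved_between_visits: bool   # seen in different places at different times


MAX_LIFTABLE_EXTENT_M = 0.5  # assumed arm/gripper limit (hypothetical)


def plausible_object(c: Candidate) -> bool:
    """Apply simple domain-knowledge constraints to one candidate segment."""
    if c.max_extent_m > MAX_LIFTABLE_EXTENT_M:
        return False  # too big to lift, e.g. a wall or a table top
    if not c.on_support_surface:
        return False  # unsupported or fixture-like segments are unlikely objects
    return True


def filter_candidates(cands: list[Candidate]) -> list[Candidate]:
    """Keep plausible objects, listing ones seen moving first."""
    kept = [c for c in cands if plausible_object(c)]
    # False sorts before True, so candidates that moved come first;
    # Python's sort is stable, so ties keep their original order.
    kept.sort(key=lambda c: not c.moved_between_visits)
    return kept


if __name__ == "__main__":
    scene = [
        Candidate("table", 1.2, True, False),
        Candidate("pineapple", 0.3, True, False),
        Candidate("bagels", 0.25, True, True),
        Candidate("ceiling lamp", 0.4, False, False),
    ]
    print([c.name for c in filter_candidates(scene)])  # ['bagels', 'pineapple']
```

In this toy version the constraints only discard segments; a real system would also have to merge repeated sightings of the same object into one model, which is the part the sketch leaves out.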

Srinivasa and colleagues found that adding domain knowledge to the video input nearly tripled the number of objects HERB could discover, and reduced computer processing time by a factor of 190.

Now, HERB needs to learn what to call all these objects stored in its memory. "Right now, all HERB knows are models of objects. It has no idea what the actual semantic label is," Srinivasa said.

To overcome the hurdle, the team is considering using Amazon's crowdsourcing marketplace Mechanical Turk "to get humans to label the models, or learning from semantic databases like ImageNet or WordNet," he added.

Srinivasa is presenting his team's latest results Wednesday at the IEEE International Conference on Robotics and Automation in Karlsruhe, Germany.

John Roach is a contributing writer for NBC News. To learn more about him, visit his website.