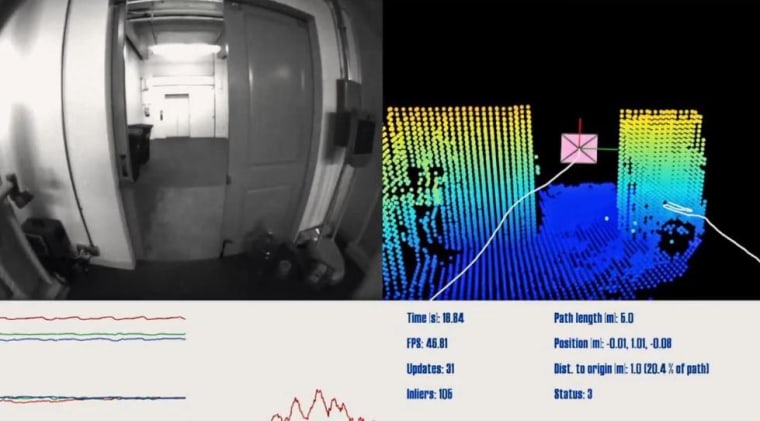

Google is tapping big brains around the world to try to enable our devices to see and understand surroundings the way humans do, in an effort it calls Project Tango. The first prototype phone uses a Kinect-like camera and sensors galore to instantly map its position and the world around it, thousands of times per second.

The world inside our current phones seems very disconnected from the real one: the icons and text boxes we interact with daily overlap little with the three-dimensional hallways and rooms we occupy. Project Tango intends to change that by giving phones a direct line to the shape of the real world.

What if your phone knew whether it was in the kitchen or the living room by the shape of the room or the way sound reverberated in it, and changed the TV's volume accordingly? What if it automatically silenced itself when it sensed your college lecture hall? What if you could play virtual tag with Mario by running around the park? These things and more could be enabled by a device that senses its surroundings instantly and with great fidelity. This video explains a few technical aspects of the system.

We were thoroughly creeped out, in a good way, by the detail replicated in 3-D by the Xbox One's new Kinect, and putting something like that in a handheld device is an idea that could produce some very interesting results.

To that end, Google is soliciting developers to build games and apps for the system, and has 200 prototypes to lend out for the most interesting proposals. You can sign up for a chance to get one here; the developers Google judges to have the most potential will get their Tango in a month.