Scientists have found ways to send messages from brain to brain, and developed methods to scan a person's gray matter and sketch an image of their thoughts. It's only a matter of time until they can read your mind, right?

Don't worry. While great advances in understanding the brain are being made, no one's going to be taking a sci-fi worthy peek inside your cranium anytime soon.

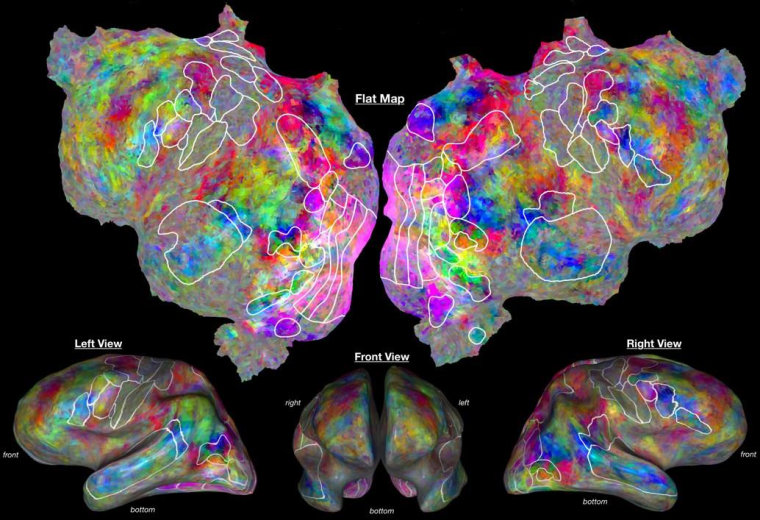

The emerging field of brain decoding is the science of taking scans of brain activity and reconstructing what the person was looking at or thinking about. Strap someone into an MRI, show them images or videos, then pore over the data and attempt to figure out where and how the brain stores and processes those images. Over the last decade, researchers around the world have successfully reconstructed faces and images from dreams, and are working on words and intentions.

Combined with reports of brain-to-brain interfaces and mind-controlled roaches, it's enough to make anyone reach for their tinfoil hat. The truth, however, is significantly less alarming.

"Brain decoding is pretty much just a laboratory trick," said Jack Gallant, professor of psychology and neuroscience at the University of California, Berkeley, in a phone interview with NBC News. "There's no good brain decoding that you can do for humans that could be widely disseminated currently."

Gallant isn't some naysayer — his lab is doing some of the most advanced work in the field. But he's also realistic about the limitations of this type of work — limitations that somehow tend to get left out of breathless reports that read straight out of science fiction. The fact is, he says, we're still largely in the dark about what's going on in the human brain.

"There's 20 billion neurons in there, and they're all doing their own little job. It's a huge amount of information," he says. "With any method we have of measuring the brain right now... the most ridiculously optimistic estimate says you can decode one one-millionth of the information in the brain at any given time."

20th-century tech, 21st-century math

So if it's impossible to tell what each neuron is doing with today's tools, where did these results come from? Believe it or not, researchers have found a way to tell what's going on in parts of the brain without actually knowing for sure what each part does.

"We don't really know what the neural networks are doing. It's a bit of a black box," Marvin Chun, professor of psychology at Yale University, told NBC News. His research, like Gallant's, focuses on how the brain's visual areas recognize faces, and is similarly limited by the data they can capture. The real advances, they both say, have been in the way we interpret that data.

"Most of the revolutionary work in this area has been computational, in algorithms especially," said Chun.

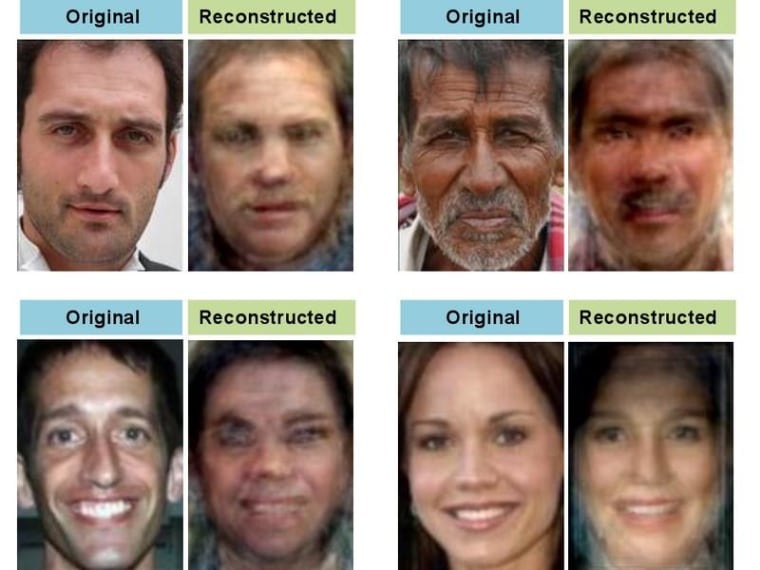

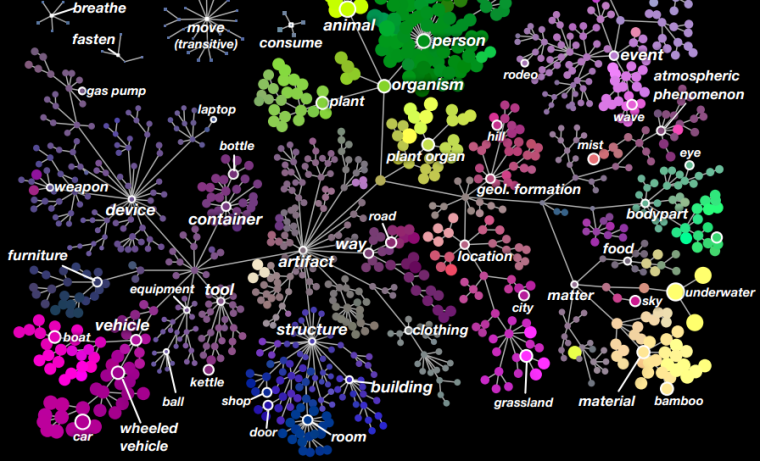

These algorithms, mathematical processes created to make sense of the brain's activity, don't see noses and eyes and hair color. But since the brain responds differently to different visual stimuli, the computer can start to associate certain brain states with certain types of imagery. Once it gets enough data, the computer can actually make a reasonable guess at what you're seeing.

"Your face and my face are different, and mathematically they will be different," he says, "So what the fMRI is picking up is the mathematical difference, and it has the ability to reconvert that into an image — which is what makes our study pretty cool."

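The idea of a computer learning to associate brain states with types of imagery can be illustrated with a toy example. The Python sketch below is not the method used by either lab. It assumes entirely synthetic "scans" (lists of random numbers standing in for voxel readings), two made-up stimulus categories, and a simple nearest-average rule. It only guesses a category and does not attempt to reconstruct an image.

```python
import random


def simulate_scan(pattern, noise, rng):
    """Return one synthetic 'scan': the category's pattern plus Gaussian noise per voxel."""
    return [value + rng.gauss(0.0, noise) for value in pattern]


def train_centroids(labeled_scans):
    """Average the scans seen for each label to get one typical pattern per category."""
    sums, counts = {}, {}
    for label, scan in labeled_scans:
        if label not in sums:
            sums[label] = [0.0] * len(scan)
            counts[label] = 0
        sums[label] = [s + v for s, v in zip(sums[label], scan)]
        counts[label] += 1
    return {label: [s / counts[label] for s in sums[label]] for label in sums}


def decode(scan, centroids):
    """Guess the label whose average pattern is closest (squared Euclidean distance)."""
    def distance(a, b):
        return sum((x - y) ** 2 for x, y in zip(a, b))
    return min(centroids, key=lambda label: distance(scan, centroids[label]))


rng = random.Random(0)  # fixed seed so the run is repeatable
n_voxels = 50           # hypothetical number of measured voxels

# Hypothetical "true" response patterns for two kinds of stimuli (invented data).
patterns = {
    "face": [rng.gauss(0.0, 1.0) for _ in range(n_voxels)],
    "house": [rng.gauss(0.0, 1.0) for _ in range(n_voxels)],
}

# "Show" each category 40 times and record noisy scans to learn from.
training = [
    (label, simulate_scan(pattern, 1.0, rng))
    for label, pattern in patterns.items()
    for _ in range(40)
]
centroids = train_centroids(training)

# Decode fresh scans the program has not seen before.
held_out = [
    (label, simulate_scan(pattern, 1.0, rng))
    for label, pattern in patterns.items()
    for _ in range(100)
]
accuracy = sum(decode(scan, centroids) == label for label, scan in held_out) / len(held_out)
print(f"decoded {accuracy:.0%} of held-out scans correctly")
```

The program never learns what a face or a house is. It only learns that the two categories produce numerically different patterns, which is the "mathematical difference" Chun describes. Real brain data is far noisier and far less cleanly separated than this invented example.
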
Don't get the wrong idea, though: this cutting-edge research reveals only the most basic concepts. It's the neurological equivalent of a baby associating the word "ball" with round things.

"We're just at the very beginning of building these types of models," said Gallant. "A lot of parts of the brain, we don't have a good idea of what it's doing. Most of the brain."

As for decoding complex processes like emotions or conversations, experts declined to even make predictions.

"Not only are we not close," joked Gallant, "no one even has any idea how close we are."

Wishful thinking

Other recent experiments also make it sound like the mind is an open book, ready to be read — or written on. Researchers at the University of Washington recently tested a "brain-to-brain" connection, where one person's thoughts moved another's finger. And a North Carolina State team created cockroach "biobots," implanted with electrodes, whose movements can be guided by their carapace-mounted sensors.

But the brain-to-brain connection, despite being a first, is more like a patchwork of known techniques: scalp-mounted sensors have been able to monitor for specific activity patterns for decades, and stimulating a part of the brain to make a corresponding part of the body twitch is similarly well-established. Writing a program to connect the two is the start of something big, but for now it's more parlor trick than quantum leap.

And cockroach brains are rather less complicated than our own, to say nothing of the highly invasive, brute-force methods used to direct the creatures' movement. The FDA won't be approving anything like that, and even conspiracy theorists would find it a stretch to trick people into putting on a helmet filled with conductive needles. Even non-invasive methods would be difficult to abuse, said Chun: "You have to put people in this huge, loud scanner. It's not a very convenient machine to use."

As for consumer devices that claim to read thoughts or emotions through your scalp or by other means, experts say they're about as accurate as mood rings.

Your secret thoughts, then, are safe for the time being. But will pocket brain-readers and mind-control guns ever bring tinfoil hats (which may actually thwart such devices) back into style? Perhaps eventually, but don't hold your breath.

"I think it's a matter of time," said Gallant, "but is that a lifetime, or geological time? I don't know."