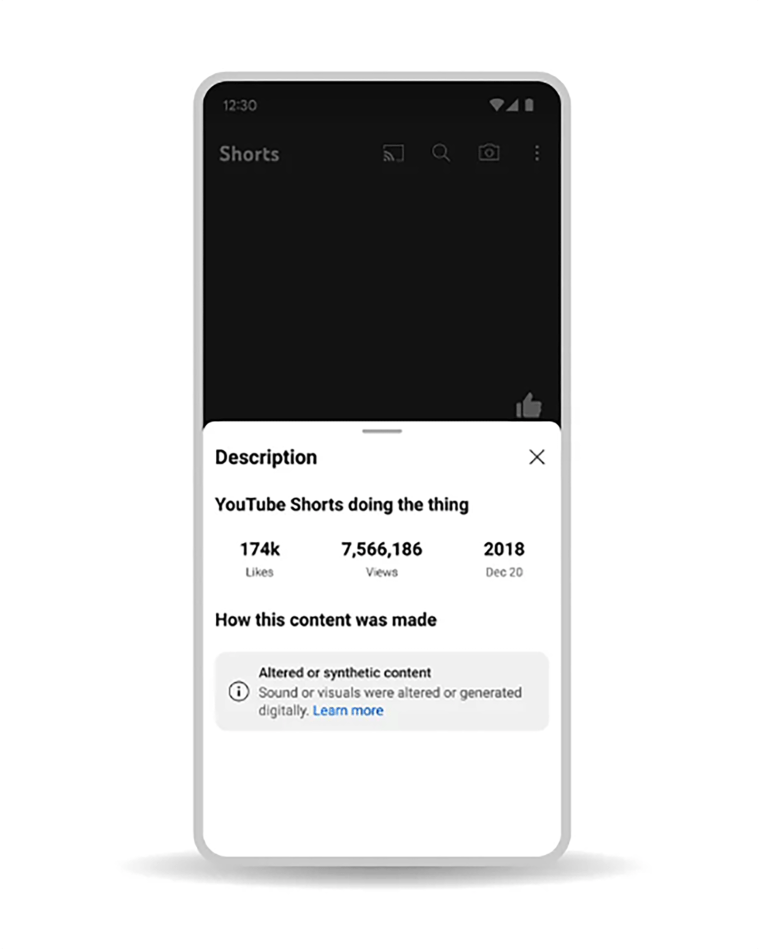

YouTube will begin to require creators to label AI-generated content on its platform in the coming months, and the platform will tell users when they’re watching content created using artificial intelligence, the company said in a blog post Tuesday.

YouTube will also allow people to request the removal of manipulated video “that simulates an identifiable individual, including their face or voice.”

“Not all content will be removed from YouTube, and we’ll consider a variety of factors when evaluating these requests,” the company said in the blog post. “This could include whether the content is parody or satire, whether the person making the request can be uniquely identified, or whether it features a public official or well-known individual, in which case there may be a higher bar.”

YouTube will have a similar removal request process for its music partners when an artist’s voice is mimicked in AI-generated music. The process will first be available to labels or distributors who represent artists in YouTube’s “early AI music experiments,” and will later be expanded to additional artist representatives.

But YouTube is also expanding its own use of AI in content creation and moderation.

“One clear area of impact has been in identifying novel forms of abuse,” the company said. “When new threats emerge, our systems have relatively little context to understand and identify them at scale. But generative AI helps us rapidly expand the set of information our AI classifiers are trained on, meaning we’re able to identify and catch this content much more quickly. Improved speed and accuracy of our systems also allows us to reduce the amount of harmful content human reviewers are exposed to.”

In late 2020, YouTube said that it faced challenges when relying more heavily on automation in its moderation processes and that the AI made more mistakes than human reviewers.

AI content on YouTube falls under the same Community Guidelines that already prohibit “technically manipulated content” that misleads viewers and could harm others.

Some AI-generated videos, particularly ones that cover “sensitive topics” like “elections, ongoing conflicts and public health crises, or public officials,” will have a label visible in the video player itself.

YouTube wrote that the policy would cover an AI video that “realistically depicts an event that never happened, or content showing someone saying or doing something they didn’t actually do.”

AI technology capable of creating realistic videos, sometimes called deepfakes, has improved rapidly in the past several years and is now available to anyone who downloads an app. Almost anyone can quickly create manipulated media, and more sophisticated methods can produce realistic fake depictions of recognizable people saying and doing things that didn’t actually happen. The most common use of this technology is to depict women in nonconsensual pornographic videos.