Americans who want to be ready for the next Russian attack can just read an old newspaper.

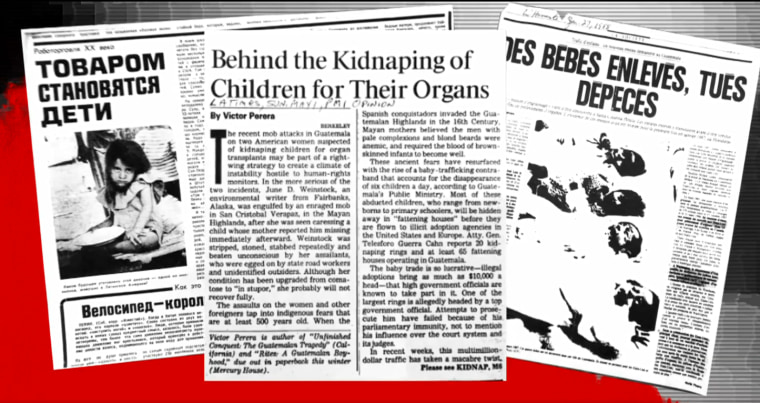

During the Cold War, hundreds of bogus headlines appeared around the world: The U.S. invented AIDS. Wealthy Americans were adopting children to harvest their organs. If these sound like the kind of conspiracies pushed by Russian trolls during the 2016 election, there’s a good reason: They were once promulgated by Russian or Soviet agents.

Russia has a century-old playbook for “disinformation,” historians and former intelligence officers say. It recycles tactics and narratives, which offers clues for detecting its next information-warfare attack on our elections.

“I believe in Russia they do have their own manual that essentially prescribes what to do,” said Clint Watts, a research fellow at the Foreign Policy Research Institute and a former FBI agent.

“The main difference is the technology available to them,” said Todd Leventhal, a retired senior counter-disinformation officer at the State Department. “The methodology is the same.”

And whether or not you believe the campaign changed a single vote in 2016, “nobody likes to be manipulated,” he said.

Past is prologue

Governments across the ages have used “disinformation,” deliberately spreading false stories to deceive, often as part of military feints and maneuvers.

“Disinformation is just lies,” said Kara Swisher, editor of Recode, a tech-news website. “It's putting out false information to confuse, really, and distract and to make you question real information.”

But the Soviets under Joseph Stalin elevated “dezinformatsiya” to its own government agency, aggressively spreading lies against their political opponents and misleading citizens with bogus propaganda on a mass scale. The practice in Russia dates back at least to the 1880s, when it was deployed by the Tsarist secret police, the KGB’s predecessor.

“The Soviets were notorious... for featuring any kind of tension between races in the United States to suggest that this is a highly discriminatory society,” said Kathleen Hall Jamieson, a University of Pennsylvania communications professor.

“Don't look to the United States as this ‘shining city sitting on a hill,’” she said, describing the Soviet attitude, “but actually as this bunch of hypocrites who are pretending they're a shining city sitting on a hill but actually what they have is a facade.”

Methods and sources

A successful disinformation campaign, then and now, requires three elements, said Watts. The first is a state-sponsored news outlet to provide a baseline of communications, such as RT (Russia Today) or Sputnik.

The second is alternative media sources willing to run stories that fall short of traditional fact-checking standards.

The third is covert personas and agents of influence who will help advance stories into the media, whether as trolls, front organizations and cutouts, or compromised or willing “fellow travelers.”

“That essentially is a spectrum,” said Watts. “It's from overt, what they're very clearly saying that they support, to covert, that which they plausibly deny to have any involvement with.”

Techniques include forging documents, faking or engineering compromising materials, and using undeclared front groups to help advance storylines into the media stream.

A preferred tactic was then to seed a story in a newspaper that was susceptible to Russian influence, because it was run by the KGB, a journalist was paid off, or intermediaries were sympathetic to the Russian cause. Once there, it could take off worldwide.

In the 1980s, the Russians planted a story in an Indian newspaper that the U.S. had invented AIDS, a KGB disinformation campaign known as "Operation Infektion," according to reports, former intelligence officers, and notes taken on the intelligence agency’s documents by a defector and former KGB archivist, Vasili Mitrokhin, reported on by intelligence historian Christopher Andrew in his book, “The Sword and the Shield.”

It was later picked up by a Russian magazine and TASS, the Russian state-owned news service.

From there it went worldwide, appearing in hundreds of newspapers, and, in 1987, anchor Dan Rather read a news item on it on the CBS Evening News.

The entire basis was a purported anonymous letter to the editor printed in the Indian paper. Later, a doctor with a Russian connection appeared, offering heavily qualified explanations, built on shaky suppositions, of how the virus might have been created in a U.S. government laboratory.

In the modern era, a bogus story may get its start on 4chan, the extreme message board, or fringe areas of Twitter before being picked up by partisans with more followers and making its way up the social media and media food chain. In some cases it ends up getting rehashed by Fox News or even making its way in some form to President Trump's tweets and speeches.

In many cases, the Russians didn’t invent the story but found one already out there, selectively reframed it to highlight the worst parts while downplaying any qualifying information, and promoted it.

In 1986, a Honduran adoption official relayed to reporters how his workers were hearing the false local legend of children being adopted to be butchered and used for organ transplant, according to a 1994 State Department report authored by Leventhal. But the Honduran official’s phrasing made it appear as if the story was true and it was reported in local newspapers. He forcefully denied it and issued corrective statements and it disappeared from local press.

Months later, Soviet newspapers picked it up, stripping out the denial, and repeated it as fact, according to the Mitrokhin archive. Versions of it went on to appear in hundreds of newspapers worldwide, along with new details.

The hoaxes are almost impossible to eradicate. Versions of the “baby parts” story, with no ties to Russia, have driven mob lynchings in Mexico, India and other places, preceded by false reports circulating virally on WhatsApp and other messaging platforms.

During the 2016 election attack campaign by Russia, the same story appeared on a Tumblr page controlled by the Internet Research Agency, a Kremlin-linked online disinformation firm.

The page posted a meme to Tumblr featuring the faces of smiling black children next to a Jet magazine cover story on 800,000 missing black children. "Look up black organ harvesting," the post read. "Some one or some thing is stealing and consuming our children."

It was the same story from Honduras in the 1980s, recycled by Russia and given a new twist to stoke modern racial divisions.

Killer lies

Disinformation can also have serious repercussions.

The "baby parts" story led to foreign countries making it harder for American parents to adopt children. In May 1991, Turkey suspended intercountry adoptions because of the rumor, the State Department reported. In Bulgaria, at one point adoptive parents had to certify they would not allow their adopted children to be organ donors or take part in medical experiments, archival adoption papers show.

It also spurred several documented physical attacks on American travelers. The AIDS story undercut support for America in target countries, according to a 1987 State Department report, and fueled hysteria at a time when HIV-positive patients who could have benefited from clear-headed allocation of funds for research and treatment were dying.

“The core concept is erosion of trust,” said Renee DiResta, director of research at New Knowledge, an organization that studies disinformation campaigns.

“If I’m constantly living in a state of heightened suspicion, that really breaks down relationships between people,” DiResta said. “You assume that everyone is acting in bad faith and it’s hard to have a conversation. You assume that your media is lying to you then it’s hard to know what to trust.”

“And when you look at where we are as a society today,” she added, “I would argue that they knew what to target very effectively.”

The other goal, experts say, is to weaken America in a zero-sum game of geopolitics and force the country to accept Russia on its own terms.

"Russia wants to reach an equilibrium," said William Wohlforth a professor of government at Dartmouth College. "They want to have a dialogue about cyberspace, have us to lay off them, and reach a deal where we accept a moral equivalence between our information space getting into theirs and ours into theirs."

"Most Americans would not want to make that deal that equates authoritarian regimes with democracy," he said.

America's technologies, free speech, privacy protections and regulatory approach have made attacking that democracy easier than ever.

"Twenty years ago the Russians had to recruit journalists to find people to disseminate something," said John Schindler, a former NSA analyst.

"Nowadays they just have to start a meme."